What to Do When You Lose Logs with Kubernetes

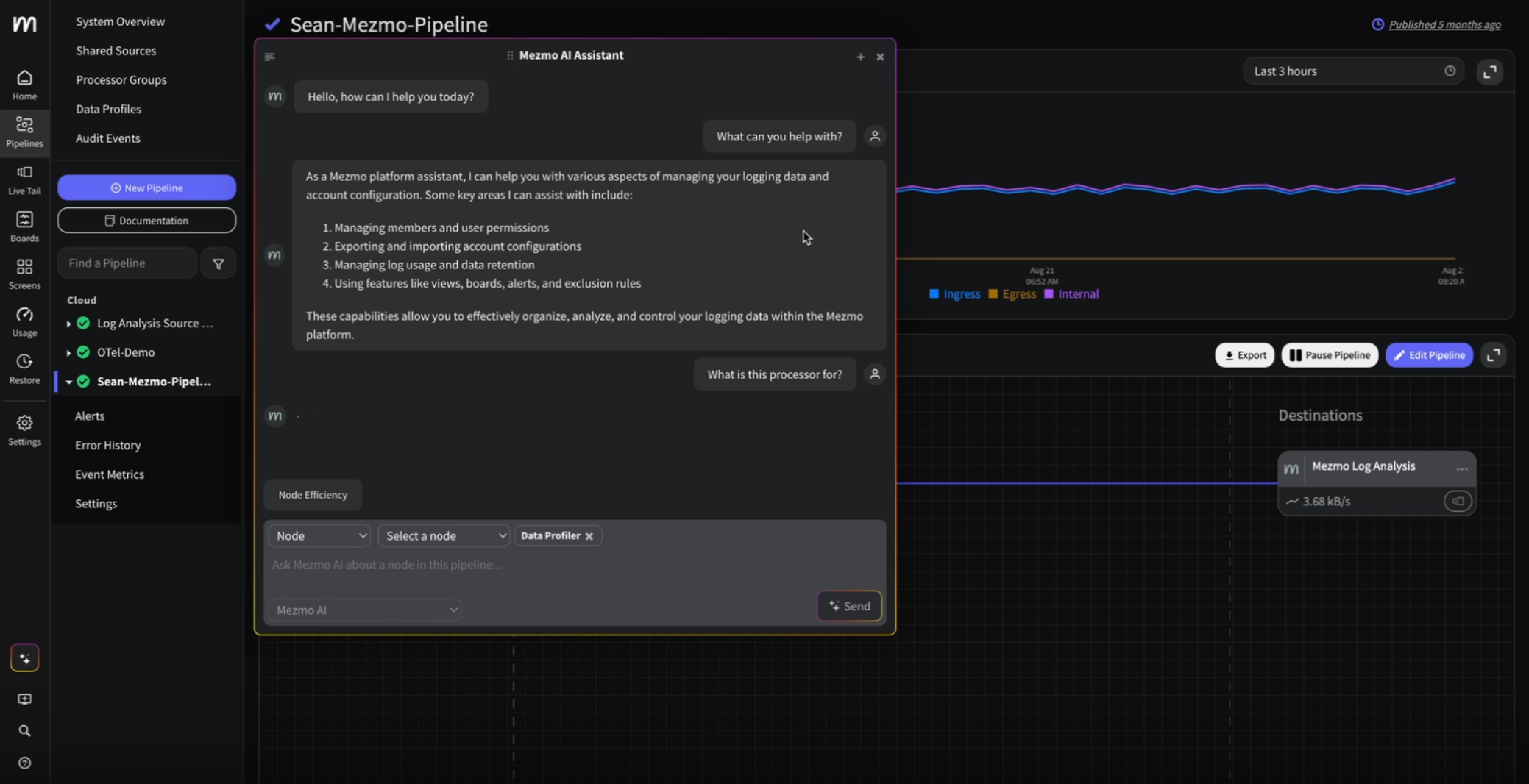

Kubernetes has fundamentally changed the way we manage our production environments. The ability to quickly bring up infrastructure on demand is a beautiful thing, but it also brings some complexity, especially when it comes to logging. Logging is always an important part of maintaining a solid running infrastructure, but even more so with Kubernetes. Since Kubernetes clusters are constantly being spun up and spun down, making sure logging functions correctly is critical. With Mezmo, formerly known as LogDNA, we make it extremely easy for you to get the job done when it comes to logging, but getting log data out of these complex environments can still be a pain point for some.

From a support perspective, we see a few common problems with Kubernetes deployments where users see logs coming in late or not coming in at all. Before we dive into those, here's a little background on how Mezmo works within a Kubernetes environment.

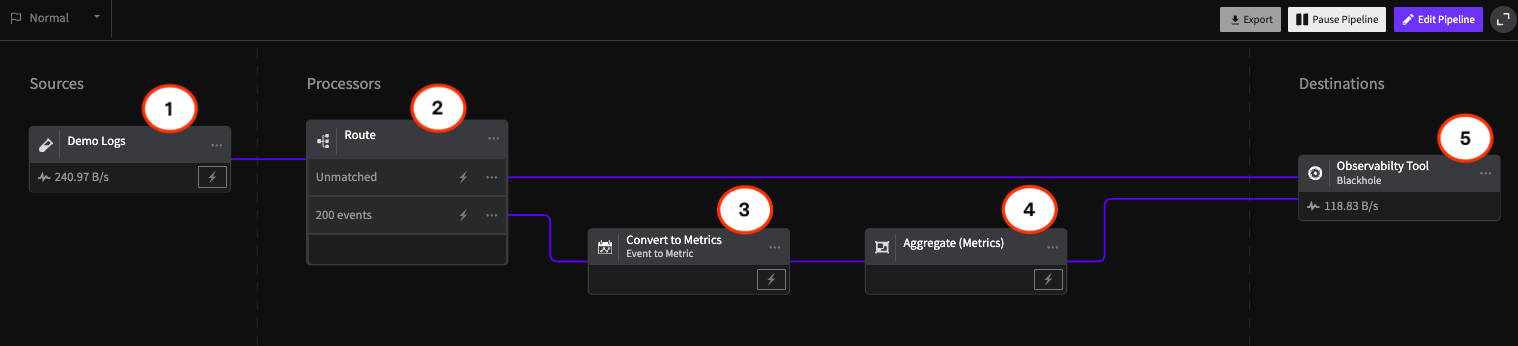

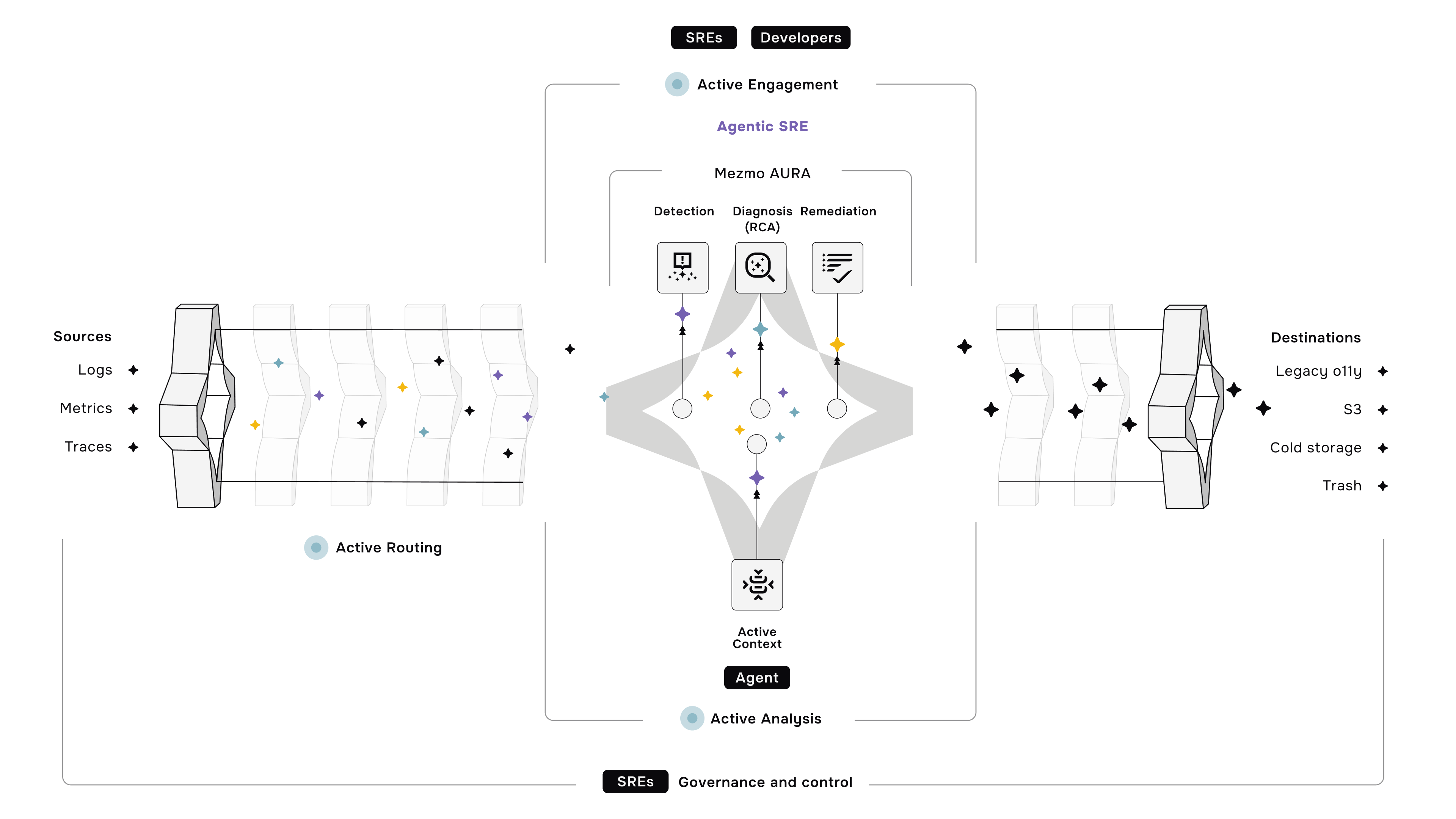

When you deploy Mezmo into your Kubernetes infrastructure, the agent runs as a pod on each node and ships both the STDOUT/STDERR output of your containers and your application logs. By default, we collect these logs from all of your namespaces. This centralized approach helps maintain a resilient telemetry pipeline that captures application, infrastructure, and security logs across distributed components.

Now that you have a basic idea of how Mezmo handles your Kubernetes logs, we can dig into a few common problems that arise within these deployments and the best practices that reduce the risk of them occurring.

Networking Issues

Because Kubernetes is a distributed system, proper networking and communication are critical. When communication on the networking level is disrupted, not only do the applications suffer but logging for these applications can be interrupted as well, reducing insight into your infrastructure.

The symptoms of networking issues can look like:

- A master node that cannot connect to other nodes.

- Nodes that cannot communicate with each other.

- Frequent timeouts within the nodes themselves, interrupting communication between a node and the pods running on it.
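
To tell which of these symptoms you are facing, it can help to probe node-to-node connectivity directly. The snippet below is a minimal sketch, not part of the Mezmo agent: it attempts a TCP connection with a short timeout and reports whether each address answered. The function names `can_connect` and `check_nodes` are our own for illustration, and the node addresses and port you pass in are assumptions you would replace with values from your cluster.

```python
import socket


def can_connect(host: str, port: int, timeout: float = 2.0) -> bool:
    """Return True if a TCP connection to host:port succeeds within the timeout."""
    try:
        with socket.create_connection((host, port), timeout=timeout):
            return True
    except OSError:
        # Covers refused connections, unreachable hosts, DNS failures, and timeouts.
        return False


def check_nodes(addresses, port: int, timeout: float = 2.0) -> dict:
    """Map each address to whether it accepted a TCP connection on the given port."""
    return {addr: can_connect(addr, port, timeout) for addr in addresses}
```

For example, `check_nodes(["10.0.0.11", "10.0.0.12"], port=10250)` would probe the default kubelet port on two hypothetical node IPs. A `False` for every node points toward a cluster-wide networking problem, while a single `False` suggests an issue isolated to that node.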

Surprisingly, this is a common issue, and though it might cause some anxiety, your logs are not being dropped: the Mezmo agent is robust when it comes to handling scenarios like this. This keeps your telemetry pipeline intact and avoids gaps in critical compliance or incident data during outages.

This guide is a good resource for learning Kubernetes networking, how to find your cluster IPs, service IPs, pod network/namespace, cluster DNS and more.

Deployment Configuration

Another common issue we see is container or application logs not being picked up correctly, or not being picked up at all. There can be multiple reasons why you might not see your logs, but it most likely comes down to the configuration of your deployment.

First, make sure your logs are being written to the proper directory. By default, Mezmo's agent reads from /var/log. Your applications may be writing logs to a different directory, leaving you to wonder why they are not showing up. This can be resolved in two ways. One way is to simply have your application write logs directly to /var/log.

Another way, which is more efficient, is to modify your YAML configuration so that the agent actively picks up the directory your application is writing to, and to make sure those logs are also written to STDOUT. Remember that if your container isn’t writing to STDOUT and you modify the config to read from the new directory, your application logs won’t get picked up.
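
On the application side, writing to STDOUT is usually a small change. As a rough sketch, assuming a Python service (the helper name `build_stdout_logger` and the log format are our own choices, not anything Mezmo requires), a logger can be pointed at STDOUT like this:

```python
import logging
import sys


def build_stdout_logger(name: str, stream=None) -> logging.Logger:
    """Create a logger that writes one line per record to STDOUT (or a given stream)."""
    logger = logging.getLogger(name)
    logger.setLevel(logging.INFO)
    logger.propagate = False  # avoid duplicate lines via the root logger
    if not logger.handlers:  # don't attach a second handler on repeated calls
        target = stream if stream is not None else sys.stdout
        handler = logging.StreamHandler(target)
        handler.setFormatter(
            logging.Formatter("%(asctime)s %(levelname)s %(name)s %(message)s")
        )
        logger.addHandler(handler)
    return logger
```

Calling `build_stdout_logger("checkout").info("order processed")` emits a single line on the container's STDOUT. The optional `stream` argument exists only so the output can be redirected in tests.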

Advanced users might also define custom grok patterns in their pipeline to normalize unstructured logs, extract key fields, and enable more accurate alerts and visualization. This is particularly helpful when applying alerting or analytics downstream.
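
To give a feel for what such a pattern does, here is a small sketch that uses a plain Python regular expression with named groups as a stand-in for a grok pattern. It is not grok syntax and not Mezmo's pipeline configuration. The line format (`<timestamp> <LEVEL> [<service>] <message>`) and the field names are hypothetical.

```python
import re

# Hypothetical line format: "<ISO timestamp> <LEVEL> [<service>] <message>"
LINE_PATTERN = re.compile(
    r"^(?P<timestamp>\d{4}-\d{2}-\d{2}T\d{2}:\d{2}:\d{2}(?:\.\d+)?Z?)\s+"
    r"(?P<level>[A-Z]+)\s+"
    r"\[(?P<service>[^\]]+)\]\s+"
    r"(?P<message>.*)$"
)


def parse_line(line: str):
    """Return the extracted fields as a dict, or None if the line doesn't match."""
    match = LINE_PATTERN.match(line.strip())
    return match.groupdict() if match else None
```

A line that matches comes back as a dictionary with `timestamp`, `level`, `service`, and `message` keys, which is the kind of structure alerts and visualizations can work with. A line that does not match returns `None`, so it can be passed through unparsed rather than dropped.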

Next Steps

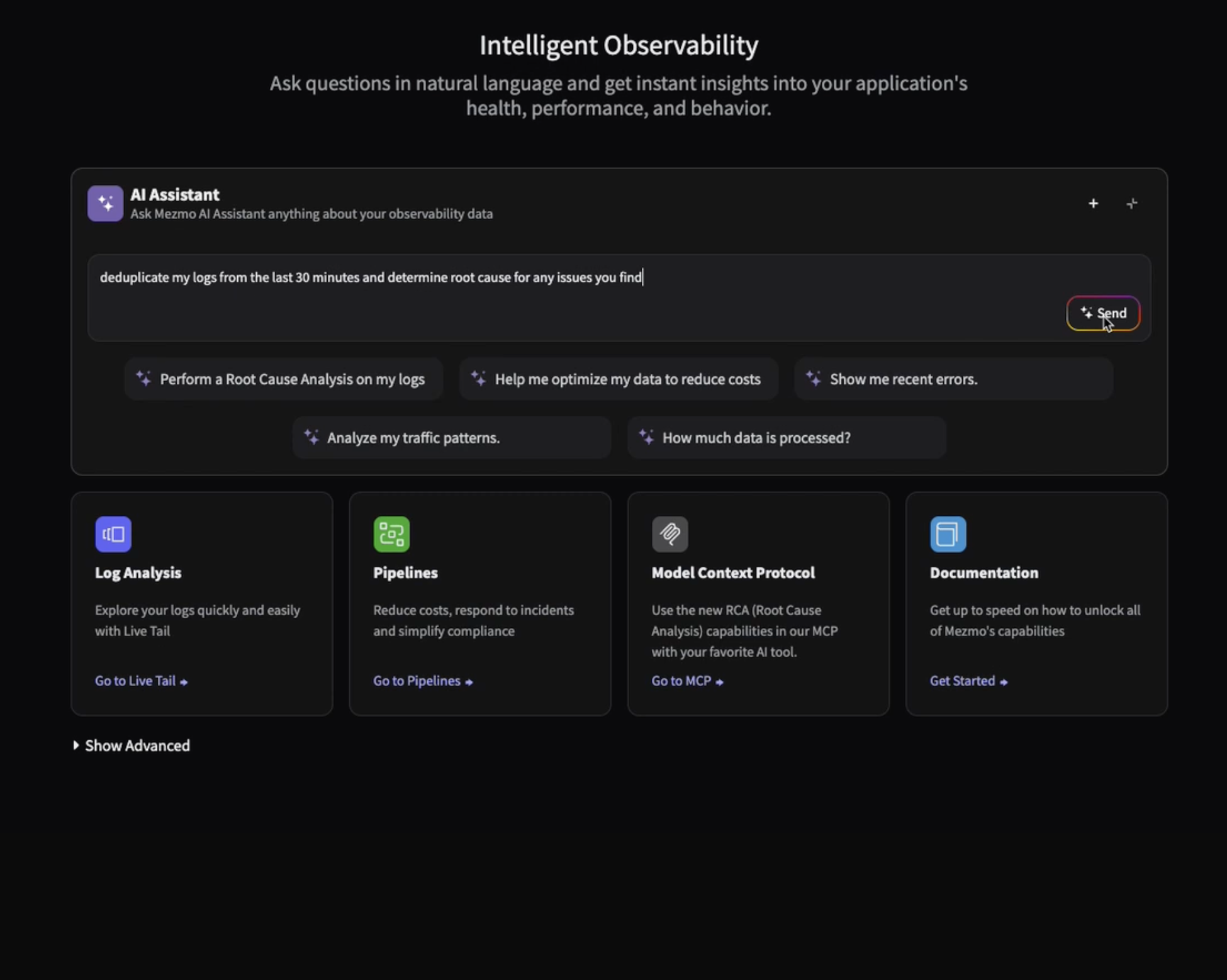

For more best practices on how to set up Kubernetes for logging, check out our webinar recap. Issues do arise within a Kubernetes deployment that, if not taken care of, can become bigger bottlenecks. Losing the logs that give you insight into those issues should not be one of your problems.

This includes poor log rotation practices, which can silently cause disk pressure or lead to long-term data loss. Be sure to implement and enforce log rotation policies across all nodes and containers.
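
For applications that write their own log files, rotation can be enforced in the application itself. The following is a minimal sketch using Python's standard `RotatingFileHandler`. The helper name `build_rotating_logger` and the size and backup limits are assumptions for illustration, and this does not replace node-level rotation of container logs.

```python
import logging
from logging.handlers import RotatingFileHandler


def build_rotating_logger(path: str, max_bytes: int = 1_000_000, backups: int = 3):
    """Create a logger whose file is rotated by size, keeping a fixed number of backups."""
    logger = logging.getLogger(f"rotating:{path}")
    logger.setLevel(logging.INFO)
    logger.propagate = False  # keep records out of the root logger
    if not logger.handlers:  # don't attach a second handler on repeated calls
        handler = RotatingFileHandler(path, maxBytes=max_bytes, backupCount=backups)
        handler.setFormatter(logging.Formatter("%(asctime)s %(levelname)s %(message)s"))
        logger.addHandler(handler)
    return logger
```

With these settings, disk usage for that log stays bounded at roughly `max_bytes * (backups + 1)`, because the oldest backup is deleted each time the active file rolls over.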

This write-up was created by the Mezmo support team to help you identify, investigate, and resolve the most common problems we see in customer deployments.
