Logging with Kubernetes Should Not Be This Painful

LogDNA is now Mezmo but the product you know and love is here to stay.

Before reading this article, we recommend having a basic working knowledge of logging with Kubernetes. Check out our previous article, Kubernetes in a nutshell, for a brief introduction.

Logging with Kubernetes using the Elasticsearch stack is free, right?

If the goal of using Kubernetes is to automate management of your production infrastructure, then centralized logging is almost certainly a part of that goal. Because containers managed by Kubernetes are constantly created and destroyed, and each container is an isolated environment in itself, setting up centralized logging on your own can be a challenge. Fortunately, Kubernetes offers an integration script for the free, open-source standard for modern centralized logging: the Elasticsearch stack (ELK). But beware: Elasticsearch and Kibana may be free, yet running ELK on Kubernetes is far from cheap.

Easy installation

As outlined by this Kubernetes docs article on Elasticsearch logging, the initial setup required for an Elasticsearch Kubernetes integration is actually fairly trivial. You set an environment variable, run a script, and boom, you're up and running.

However, this is where the fun stops.

The Elasticsearch Kubernetes integration works by running per-node Fluentd collection pods that send log data to Elasticsearch pods. You then view the logs by accessing the Kibana pods. In theory, this works just fine. In practice, Elasticsearch requires significant effort to scale and maintain.
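For orientation, the "set an environment variable, run a script" setup might look roughly like the sketch below. The variable names and the script path are assumptions based on older Kubernetes cluster scripts, not something this article specifies, so the sketch only prints the commands unless you set `DRY_RUN=0`.

```shell
#!/bin/sh
# Sketch only: variable names and script path are assumptions taken from
# older Kubernetes cluster scripts. Check the docs for your version.

# Print each command instead of running it unless DRY_RUN=0 is set.
run() {
  if [ "${DRY_RUN:-1}" = "1" ]; then
    echo "+ $*"
  else
    "$@"
  fi
}

elk_setup() {
  # 1. Select Elasticsearch as the logging destination (assumed variable names).
  export KUBE_LOGGING_DESTINATION=elasticsearch
  export KUBE_ENABLE_NODE_LOGGING=true
  # 2. Bring the cluster up with the stock script (assumed path).
  run cluster/kube-up.sh
  # 3. List the logging pods: per-node Fluentd collectors, Elasticsearch, Kibana.
  run kubectl get pods --namespace=kube-system
}

elk_setup
```

The last command is where you would expect to see one Fluentd pod per node alongside the Elasticsearch and Kibana pods.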

JVM woes

Since Elasticsearch is written in Java, it runs inside of a Java Virtual Machine (JVM), which has notoriously high resource overhead, even with properly configured garbage collection (GC). Not everyone is a JVM tuning expert. Instead of being several services distributed across multiple pods, Elasticsearch is one giant service inside of a single pod. Scaling individual containers with large resource requirements seems to defeat much of the purpose of using Kubernetes, since it is likely that Elasticsearch pods may eat up all of a given node's resources.
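To make the resource pressure concrete, here is a minimal sketch of how you might size the JVM heap for an Elasticsearch container. It assumes the commonly cited rule of thumb of giving the heap no more than half of the container's memory and keeping it below roughly 32 GB. The function name and the 31 GB cap are illustrative choices, not part of any official tooling.

```shell
#!/bin/sh
# Sketch: pick a JVM heap size (in MB) for an Elasticsearch container,
# given the container's memory limit (in MB).
# Assumed rule of thumb: heap <= 50% of container memory, capped below
# ~32 GB so the JVM can keep using compressed object pointers.
recommended_heap_mb() {
  limit_mb=$1
  cap_mb=31744            # 31 GB, a conservative cap (assumption)
  heap_mb=$(( limit_mb / 2 ))
  if [ "$heap_mb" -gt "$cap_mb" ]; then
    heap_mb=$cap_mb
  fi
  echo "$heap_mb"
}

# Example: JVM flags for a pod limited to 8 GiB of memory.
heap=$(recommended_heap_mb 8192)
echo "ES_JAVA_OPTS=-Xms${heap}m -Xmx${heap}m"
```

Even under this rule, half of the pod's memory is reserved for the heap alone, which is why a single Elasticsearch pod can crowd out its neighbors on a node.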

Elasticsearch cluster overhead

Elasticsearch's architecture requires multiple Elasticsearch masters and multiple Elasticsearch data nodes just to be able to start accepting logs at any scale beyond deployment testing. Each of these masters and data nodes runs inside its own JVM, and together they consume significant resources. If you are logging at a reasonably high volume, this overhead is inefficient inside a containerized environment. Logging at high volume, in general, introduces a whole other set of issues. Ask any of our customers who've switched to us from running their own ELK.
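A back-of-the-envelope sketch of that overhead follows. The node counts and memory sizes are hypothetical inputs rather than measurements, and the quorum helper simply applies the usual majority rule for master-eligible nodes.

```shell
#!/bin/sh
# Sketch: rough memory footprint of a small Elasticsearch cluster.
# All sizes are hypothetical inputs, not measurements.
cluster_memory_mb() {
  masters=$1; master_mb=$2; data_nodes=$3; data_mb=$4
  echo $(( masters * master_mb + data_nodes * data_mb ))
}

# Majority of master-eligible nodes needed to elect a master: floor(n/2) + 1.
master_quorum() {
  echo $(( $1 / 2 + 1 ))
}

# Example: 3 masters at 2 GiB each plus 2 data nodes at 8 GiB each.
cluster_memory_mb 3 2048 2 8192
master_quorum 3
```

With these example inputs, the cluster reserves about 22 GiB of memory before it has stored a single log line.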

Free ain't cheap

While we ourselves are often tantalized by the possibility of using a fire-and-forget open-source solution to solve a specific problem, properly maintaining an Elasticsearch cluster is no easy feat. Even so, we encourage you to learn more about Elasticsearch and what it has to offer, since it is, without a doubt, a very useful piece of software. However, like all software, it has its nuances and pitfalls, and it is therefore important to understand how they may affect your use case.

Depending on your logging volume, you may want to configure your Elasticsearch cluster differently to optimize for a particular use case. Too many indices, shards, or documents can each result in different crippling and costly performance issues. On top of this, you'll need to constantly monitor Elasticsearch resource usage within your Kubernetes cluster so your other production pods don’t die because Elasticsearch decides to hog all available memory and disk resources.

At some point, you have to ask yourself: is all this effort worthwhile?
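As one example of the sizing decisions involved, the sketch below estimates how many primary shards a given log volume would need. The 30 GB default target is an assumption within the commonly recommended range of tens of gigabytes per shard, the function name is illustrative, and replicas are ignored for simplicity.

```shell
#!/bin/sh
# Sketch: estimate the number of primary shards needed for a log volume.
# Arguments: daily volume (GB), retention (days), target shard size (GB).
# The 30 GB default target is an assumption. Replicas are not counted.
estimate_primary_shards() {
  daily_gb=$1; retention_days=$2; target_shard_gb=${3:-30}
  total_gb=$(( daily_gb * retention_days ))
  # Round up so the last partial shard is counted.
  echo $(( (total_gb + target_shard_gb - 1) / target_shard_gb ))
}

# Example: 20 GB of logs per day, kept for 14 days.
estimate_primary_shards 20 14
```

Changing the retention period or daily volume changes the answer, which is part of why a configuration that works at one scale can become a problem at another.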

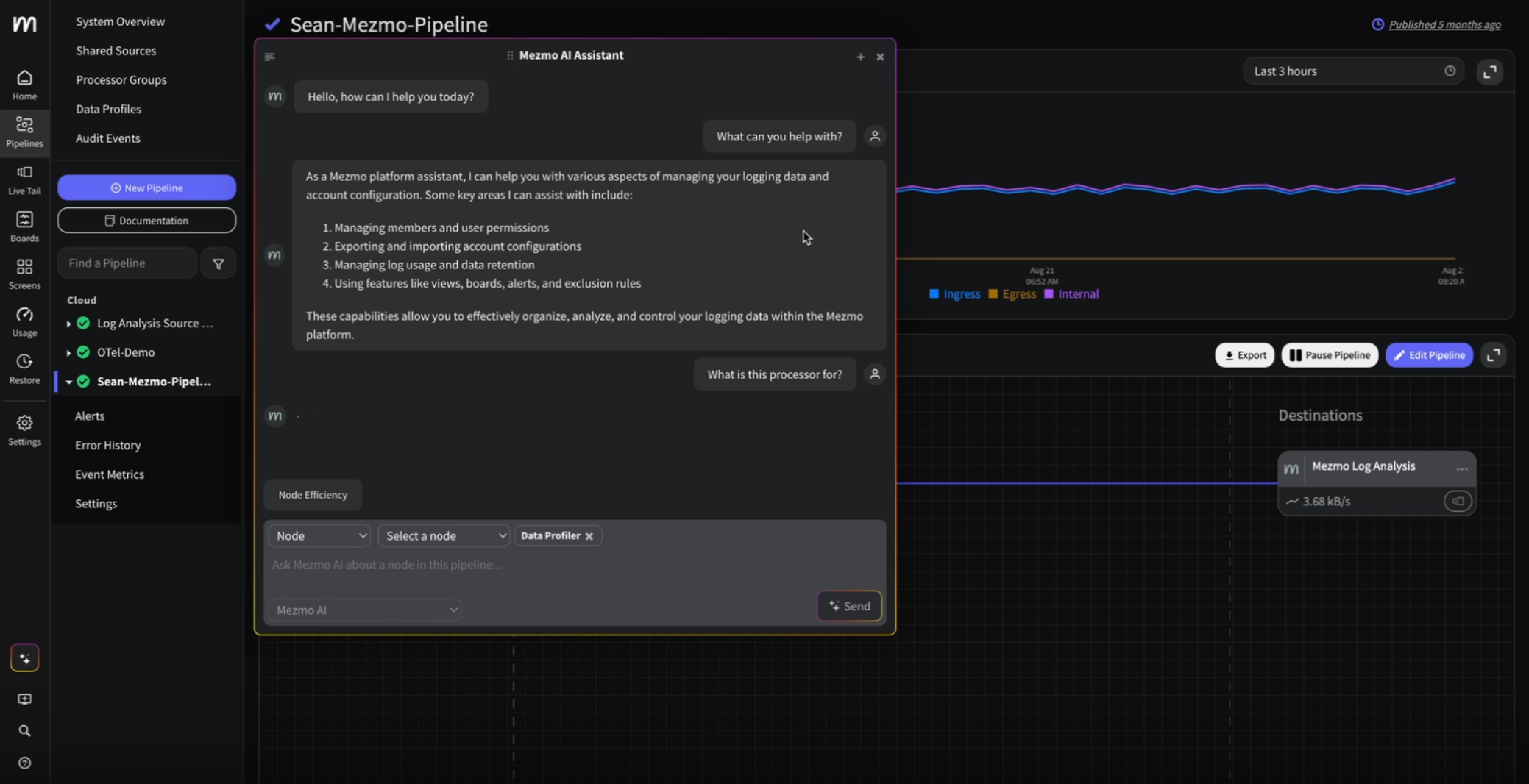

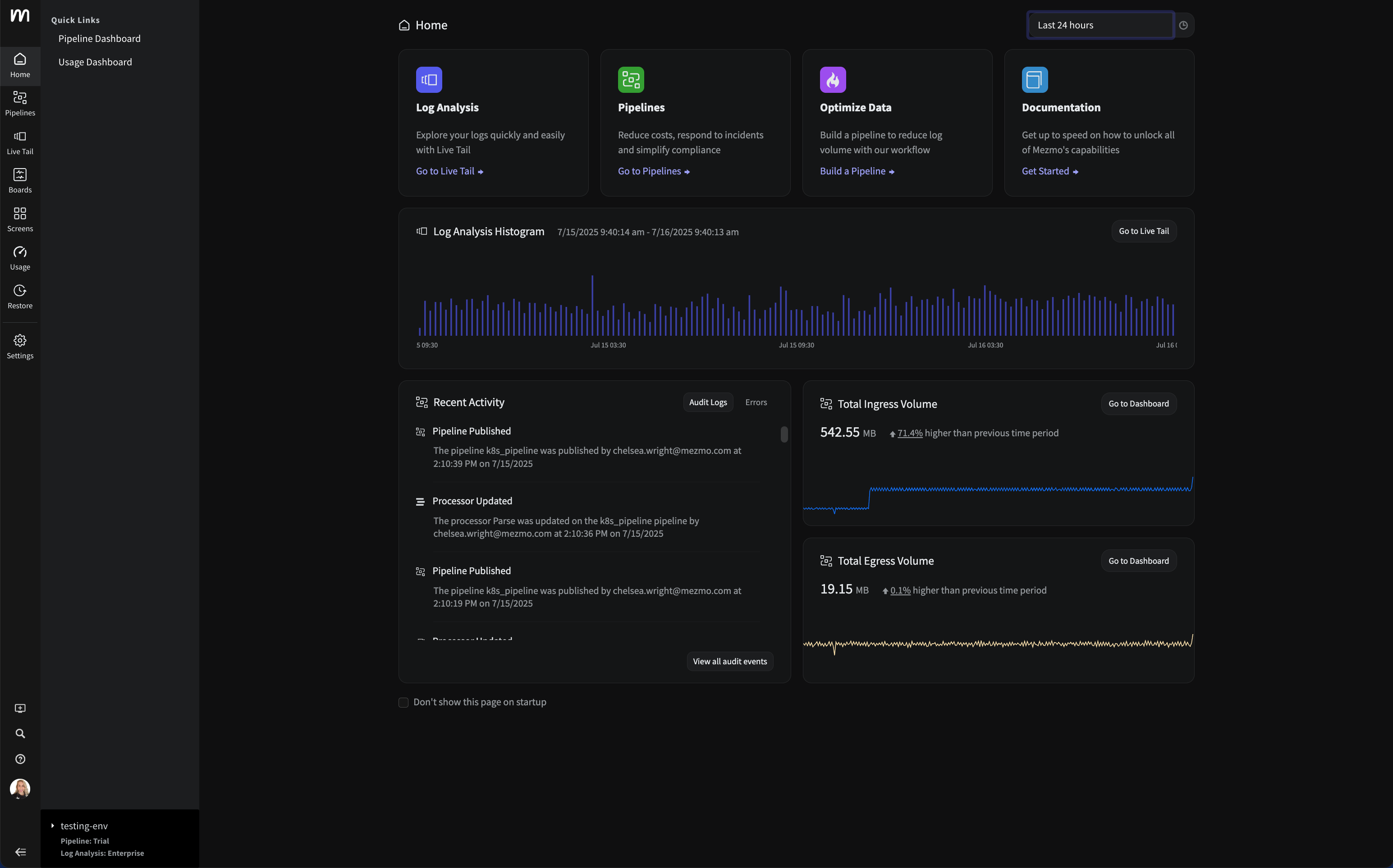

Mezmo Cloud Logging for Kubernetes

As big believers in Kubernetes, we spent a good amount of time researching and optimizing our integration. In the end, we were able to get it down to a copy-pastable set of two kubectl commands:

    kubectl create secret generic logdna-agent-key --from-literal=logdna-agent-key=<your-api-key-here>
    kubectl create -f https://raw.githubusercontent.com/logdna/logdna-agent/master/logdna-agent-ds.yaml

This is all it takes to send your Kubernetes logs to LogDNA. No manual setting of environment variables, no editing of configuration files, no maintaining servers or fiddling with Elasticsearch knobs, just copy and paste. Once executed, you will be able to view your logs inside the LogDNA web app. We also extract all pertinent Kubernetes metadata, such as pod name, container name, namespace, and container ID. No Fluentd parsing pods required (or any other dependencies, for that matter).

Easy installation is not unique to our Kubernetes integration. In fact, we strive to provide concise and convenient instructions for all of our integrations. But don't just take our word for it; you can check out all of our integrations. We also support a multitude of useful features, including alerts, JSON field search, archiving, and line-by-line contextual information.

All for $1.50/GB per month. We’re huge believers in paying for what you use. In many cases, we're cheaper than running your own Elasticsearch cluster on Kubernetes.

For those of you not yet using LogDNA, we hope our value proposition is convincing enough to at least check out our fully featured, free 2-week trial.

If you don't have a LogDNA account, you can create one on https://logdna.com or, if you're on macOS with Homebrew installed:

    brew cask install logdna-cli
    logdna register
    # now paste the api key into the kubectl commands above

Thanks for reading! We look forward to hearing your feedback.
