The Benefits of Centralized Log Management and Analysis

Log centralization is kind of like brushing your teeth: everyone tells you to do it. But until you step back and think about it, you might not appreciate why doing it is so important.

If you’ve ever wondered why, exactly, teams benefit from centralized logging and analysis, keep reading. This article walks through five key advantages of log centralization for IT teams and the businesses they support.

Correlating Events Between the Application Layer and Infrastructure Layer

In general, we can break down software environments into two fundamental parts: applications and the infrastructure that hosts them.

Without centralized logging, it’s hard to know how an event in one of those layers impacts the other layer. You might parse your infrastructure logs and detect that your server has maxed out its CPU, for example. But if you analyze the application logs separately, it’s challenging to determine what impact the high CPU utilization has on applications running on the server.

Sure, you could go and compare the infrastructure and application logs manually to correlate events. But that’s inefficient, and it doesn’t scale. If you centralize all of your logs automatically and by default, you can connect events across all layers of your environment instantly and continuously.

Identifying Trends with Centralized Logging Software

How can you tell whether an event like an uptick in error rates in an application or a slowdown in response times is an isolated issue or part of a broader trend? Ideally, you’d look at all of your logs from a central location to determine whether similar events have occurred elsewhere.

You’d also look at historical log data centralized in the same place to compare current trends to historical baselines, which would provide you with additional context for distinguishing between random fluctuations and significant trends.

You can do both of these things when you centralize your logs automatically. Without centralization, it would be virtually impossible to identify trends efficiently.

Using Centralized Logs to Identify Over-Allocation of Resources

IT teams tend to spend most of their time worrying about applications that don’t have enough resources to perform well. But an equally problematic event – especially in the age of the cloud, where most companies bill resources on a pay-as-you-go basis – is when you have allocated more of them than you require. In that case, you need to scale down to avoid wasting money.

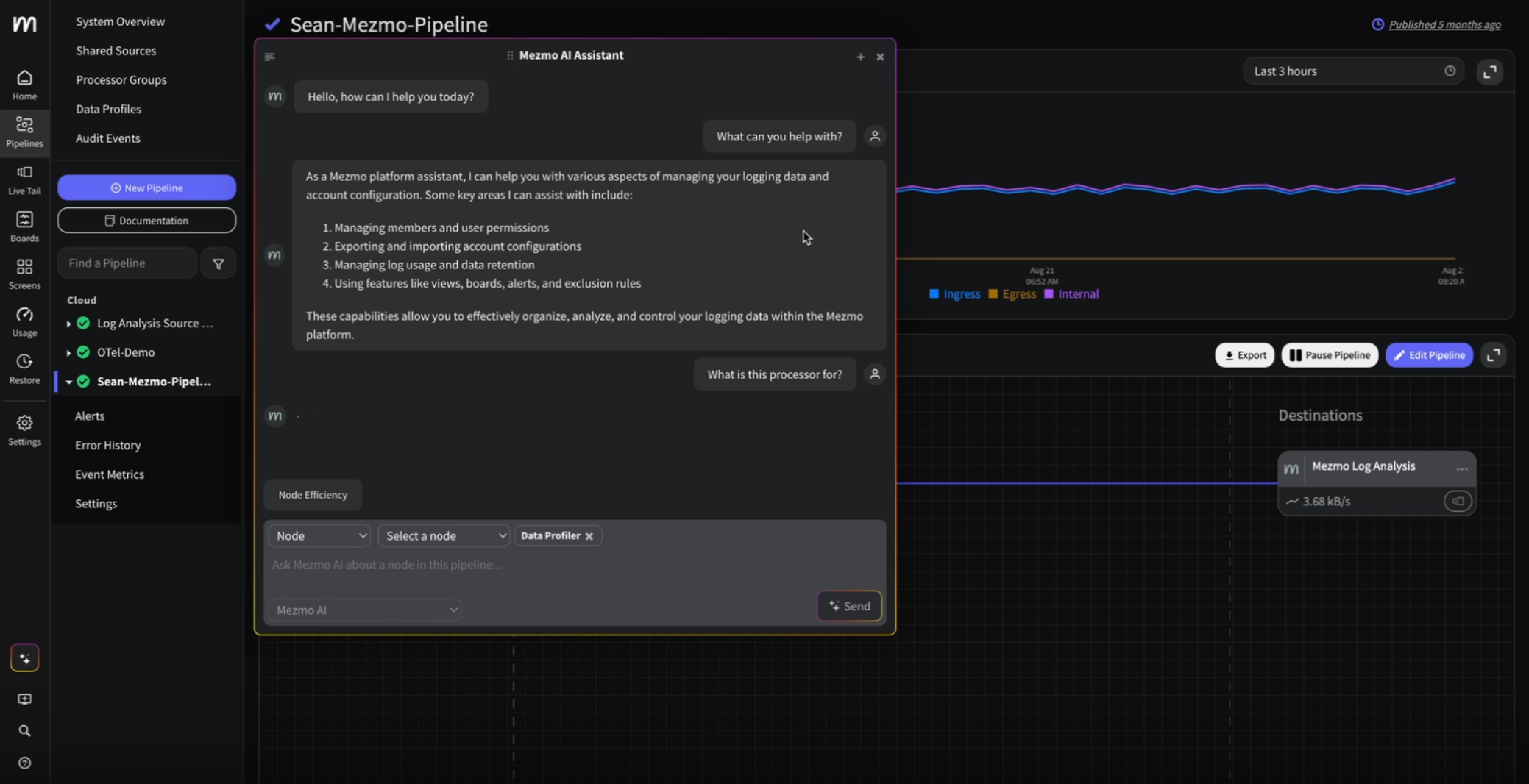

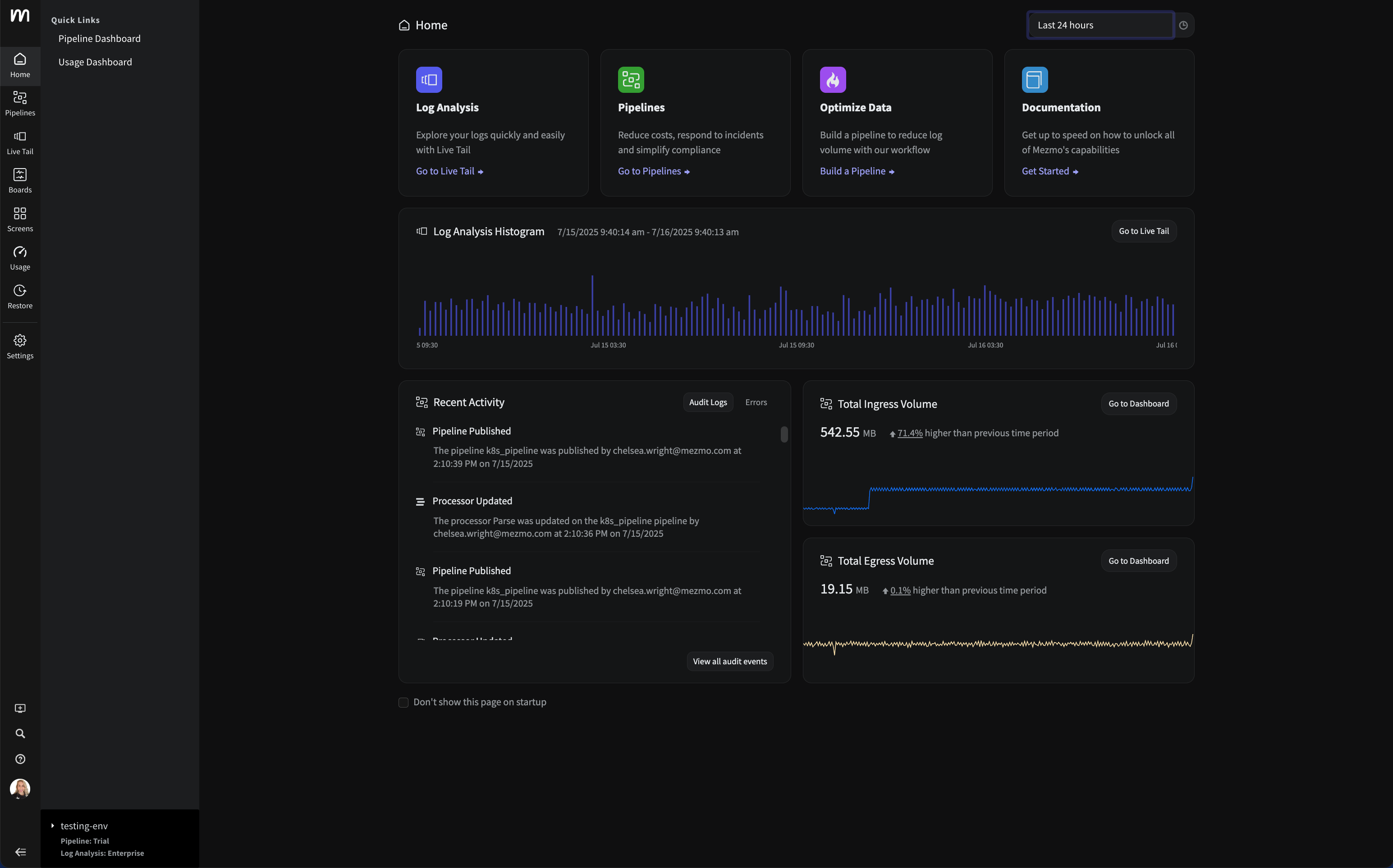

Here again, centralized logging and analysis is the key to recognizing when it’s time to scale resource allocations down. Using features like Mezmo's, formerly known as LogDNA, Kubernetes Enrichment and Presence Alerts, you can quickly view data about resource consumption alongside application performance data. You can also track resource consumption patterns over time, allowing you to safely scale back your allocations, all while continuing to follow application performance to verify that allocation changes don’t harm your applications.

Reducing MTTD and MTTR Through Centralized Log Analysis

The Mean Time to Detect (MTTD) and Mean Time to Resolve (MTTR) metrics are crucial for your customer experience. The longer it takes to find and fix problems, the less pleased your end-users will be.

When you have to toggle manually between multiple logs to confirm that an anomaly is a problem and then keep toggling as you investigate and respond to the issue, MTTD and MTTR are likely to remain high. But with centralized logging and analysis, you can interpret data and assess complex events efficiently, leading to lower MTTD and MTTR as well as happier customers.

Ensuring Consistency and Collaboration Across Teams

Last but not least, consistent, standardized log centralization can help multiple teams work together uniformly.

For example, suppose your developers centralize logs from dev/test environments in the same place where IT teams manage logs from production (while differentiating between log sources, of course). In that case, it’s easier for both teams to collaborate. Each group has visibility into what the other group sees in its part of the software delivery chain, and everyone can work toward shared goals and metrics tracked from the same centralized logs.

In an era when “breaking down silos” has assumed critical urgency for most businesses, you can’t understate the value of centralized logging as a means of driving collaboration and shared visibility.

Conclusion: The Many Benefits of Log Centralization

Log centralization is not something you do just because companies or myself told you to do it. Like brushing your teeth, it offers a range of critical benefits, albeit different ones than those associated with good oral hygiene. If you can’t manage and analyze logs from across all layers of your environment centrally and automatically, your teams will struggle to operate efficiently and create business value.

.png)

.jpg)

.png)

.png)